AI Design

Enterprise UX

Freshworks 2024

What I owned

The problem beneath the problem

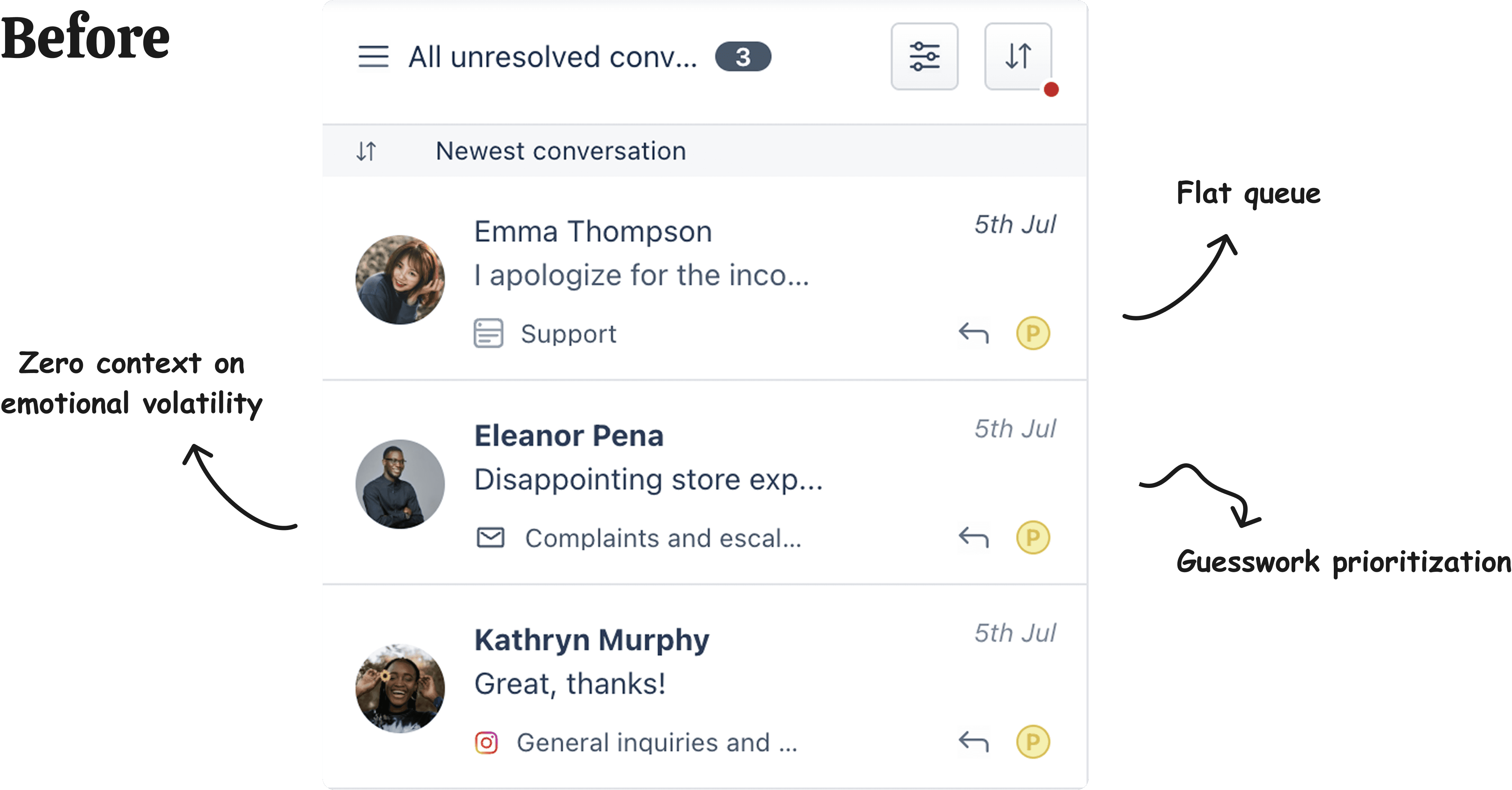

The brief was simple. Help Freshdesk and Freshchat agents prioritize upset customers faster. I spent the first week talking to agents. What I found had nothing to do with speed.

The real problem was trust. Agents didn't trust the AI. And when agents don't trust a tool, they don't use it.

Freshworks is the first Indian SaaS company to go public on NASDAQ. It builds customer relationship management tools used by everyone from 5-person startups to Fortune 500 support operations. I was working across two of its flagship products: Freshdesk, a helpdesk ticketing system, and Freshchat, a live messaging platform. When I joined this project, Freshworks was in the middle of a hard pivot to become AI-first. The stakes were existential: if agents didn't trust the AI features, the entire strategic direction would fail.

When I talked to Freshworks' own support team, where agents handled 3 to 5 simultaneous conversations, I found that they were already fast. Speed wasn't the constraint. Trust was.

"I'd rather scan every conversation myself than act on something I don't understand."

They would rather manually triage every conversation than rely on a system making decisions they couldn't verify. So I stopped designing a prioritization tool and started designing something harder: a trust relationship between human expertise and AI assistance.

📍 The ML model was still being refined. I had to design the human layer between agents and AI predictions without knowing final model accuracy. That meant designing for uncertainty as a feature, not a problem to hide.

The decision that defined the project

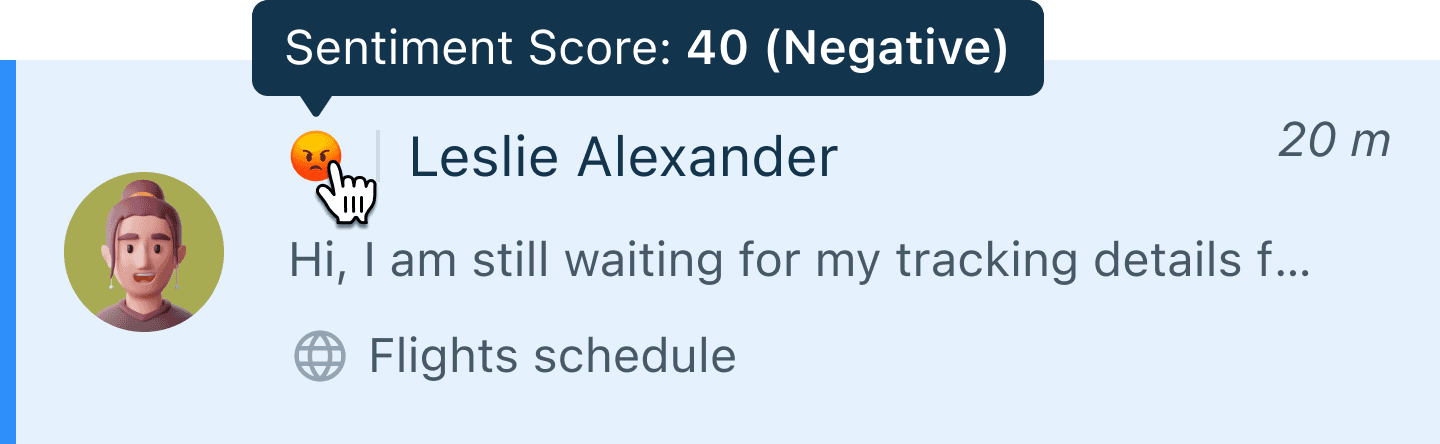

How do you visually represent sentiment to someone managing 5 conversations under pressure? Four options were on the table. Only one required zero cognitive translation.

| Option | Why it failed under pressure |
|---|---|
| | Requires mental translation when already stressed |
| | Requires learning a new system; visually overwhelming |
| | Ambiguous about magnitude |
| | Maps directly to how agents already think ✅ |

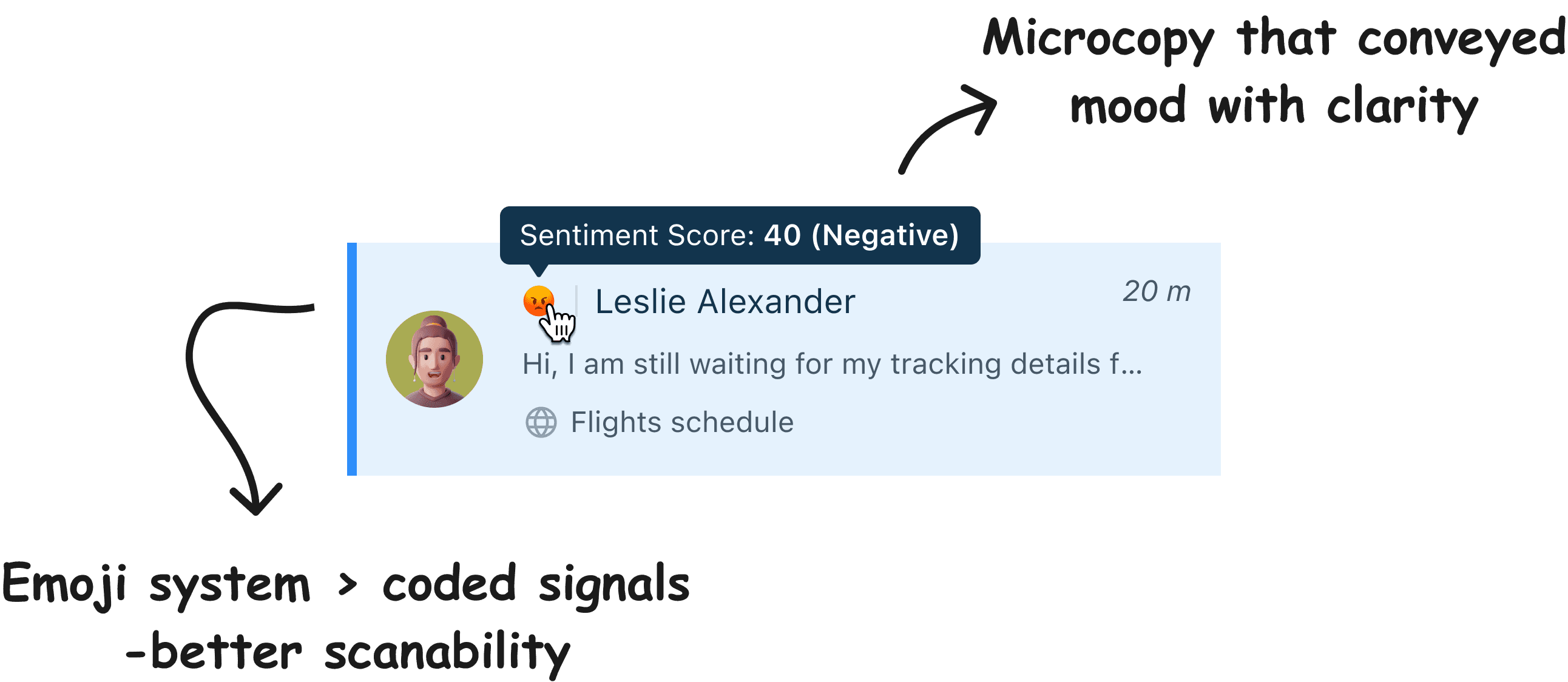

I pushed hard for emojis because they're cognitively free. When agents read a customer message, they don't think "0.87 negative sentiment." They think "this person is angry." So I tapped into that mental model: zero translation, and zero additional load on someone already at capacity.

The principle: Trust isn't built through sophistication. It's built through instant comprehension.

How I designed for trust in AI

Make it effortless to understand

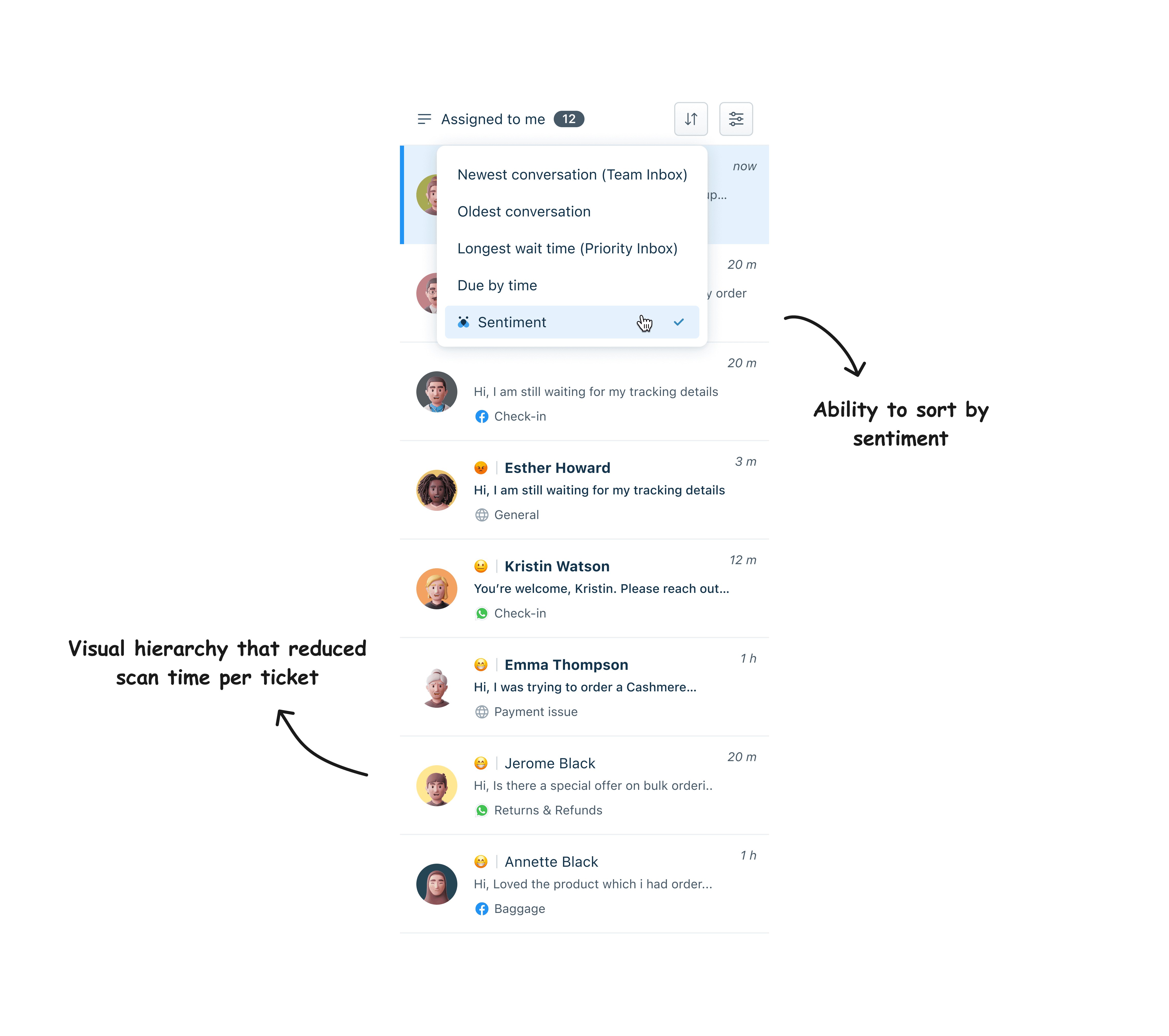

The emoji system required zero onboarding. Agents saw 😟 and immediately knew "upset customer" without decoding anything.

Keep humans in control

Agents could sort by sentiment to handle angry customers first, and they could override any prediction. Every override became a signal that improved the model's accuracy over time.
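As a rough illustration of that loop, here is a minimal sketch in Python. It is not the Freshworks implementation: the `Conversation` type, the three sentiment labels, and the `override_signals` helper are all hypothetical names chosen for the example. It shows the two behaviors described above, sorting so that negative conversations come first, and treating an agent's override as both the final word and a recorded disagreement with the model.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical labels, mapped to sort priority (lower sorts first).
URGENCY = {"negative": 0, "neutral": 1, "positive": 2}


@dataclass
class Conversation:
    id: str
    predicted: str                  # label from the model (assumed shape)
    override: Optional[str] = None  # label set by the agent, if any

    @property
    def effective(self) -> str:
        # The agent's judgment always wins over the prediction.
        return self.override if self.override is not None else self.predicted


def sort_by_sentiment(conversations):
    # sorted() is stable, so conversations with equal urgency keep their order.
    return sorted(conversations, key=lambda c: URGENCY[c.effective])


def override_signals(conversations):
    # Each disagreement becomes an (id, predicted, corrected) record
    # that could later be reviewed or fed back into training.
    return [
        (c.id, c.predicted, c.override)
        for c in conversations
        if c.override is not None and c.override != c.predicted
    ]


queue = [
    Conversation("a", "positive"),
    Conversation("b", "neutral", override="negative"),
    Conversation("c", "negative"),
]
ordered = [c.id for c in sort_by_sentiment(queue)]
signals = override_signals(queue)
```

In this sketch the overridden conversation `b` moves to the front of the queue, and the override is kept alongside the original prediction rather than replacing it, so the disagreement itself stays visible.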

Design for organizational flexibility

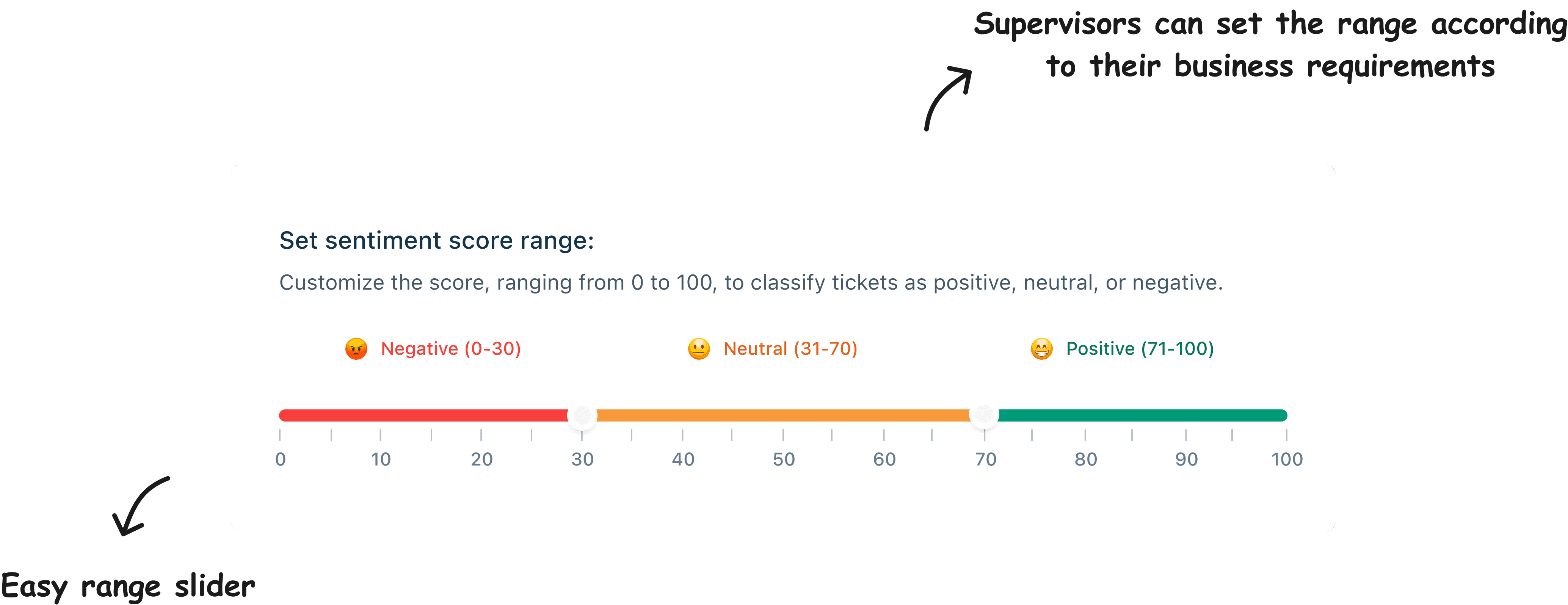

Different companies have different thresholds for what counts as negative sentiment. A B2B SaaS company tolerates frustration differently than a healthcare provider. The system let each organization define its own parameters without adding any complexity to the agent-facing interface.
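One way to picture this separation is the sketch below. Everything in it is an assumption made for illustration: the score range of -1 to 1, the `OrgThresholds` type, the default cutoffs, and the neutral and positive emojis are not taken from the actual product. The point is only that the thresholds live in per-organization configuration while the agent still sees nothing but an emoji.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OrgThresholds:
    # Hypothetical cutoffs on an assumed sentiment score in [-1.0, 1.0].
    negative_below: float = -0.3
    positive_above: float = 0.3


def to_emoji(score: float, thresholds: OrgThresholds = OrgThresholds()) -> str:
    # The agent-facing output is always a single emoji, whatever the config.
    if score < thresholds.negative_below:
        return "😟"
    if score > thresholds.positive_above:
        return "🙂"
    return "😐"


# Two illustrative organizations with different tolerance for frustration.
saas = OrgThresholds(negative_below=-0.5)
healthcare = OrgThresholds(negative_below=-0.1)

mild_frustration = -0.2
saas_view = to_emoji(mild_frustration, saas)
healthcare_view = to_emoji(mild_frustration, healthcare)
```

With these made-up numbers, the same mildly frustrated message reads as neutral for the SaaS organization and as upset for the healthcare one, and the agent's interface is identical in both cases.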

What I learned, and the principles I carry forward

Agents started relying on the system in week one.

The emoji approach worked because it didn't ask agents to change how they thought. It met them exactly where they already were.

| 60% reduction in manual triage | 2× ticket volume handled per agent |
|---|---|

The outcome I'm most proud of is that agents stopped seeing the AI as something trying to replace their judgment and started seeing it as something that confirmed it. That shift in the relationship between human expertise and AI assistance was the real win.